Portfolio Review | February 2026 (+16.17%, +38.58% YTD)

Citrini doomer take review and brief thesis on AAOI included

Opinions are my own and do not represent past, present, and/or future employers. All content is based on public information and independent research. This newsletter is not financial advice, and readers should always do their own research before investing in any security. I am invested in the semiconductor industry. As of the date of this publication, I may hold long or short positions in the securities discussed in this article.

At the end of March, the price of my paid subscriptions will double. Readers who subscribe in advance will be grandfathered in at the lower price.

In February, I returned 16.17%, delivering real alpha this time as the market was relatively flat: SMH returned +0.72% and QQQ returned -2.34%.
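For anyone who wants the gap in numbers, here is a minimal sketch of the arithmetic. It assumes "alpha" here just means simple excess return over each benchmark (no beta or risk adjustment), and the helper name `excess_return` is made up for illustration.

```python
# Simple excess return vs. each benchmark, in percentage points.
# Assumption: plain subtraction, not a beta-adjusted alpha.

def excess_return(portfolio_pct: float, benchmark_pct: float) -> float:
    """Return portfolio minus benchmark, rounded to two decimals."""
    return round(portfolio_pct - benchmark_pct, 2)

february = 16.17                           # portfolio return for February, in %
benchmarks = {"SMH": 0.72, "QQQ": -2.34}   # benchmark returns quoted above, in %

excess = {name: excess_return(february, r) for name, r in benchmarks.items()}

for name, pts in excess.items():
    print(f"vs {name}: {pts:+.2f} pts")
# vs SMH: +15.45 pts
# vs QQQ: +18.51 pts
```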

I added 3 positions and exited one, for 13 positions total. Today I yap about February happenings, provide updates and thoughts on my positions and whether I added or trimmed this month, and discuss the strengths of my key themes.

Outline

Macro View and Positioning

Key Themes

Portfolio Allocation

Positions

Macro View and Positioning

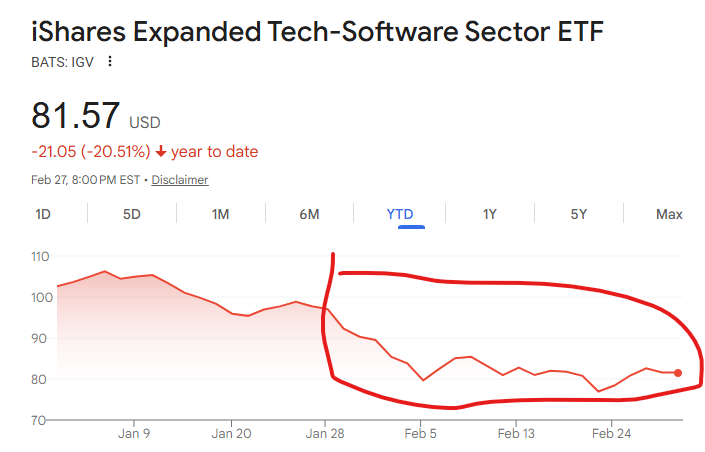

Feb 2026 was the month of SaaSpocalypse and AI-disrupting-everything-fear-selling. Good thing we hide away in the AI infra supply chain and don’t feel any of it.

You would think that if people believe AI will replace trillions of dollars of software, they have completely flipped on the AI bubble narrative and now think AI is worth the capex. But that’s not really the case: SMH is relatively flat.

What’s really been going on is a growing belief that AI is a net negative for the economy and financial assets. That is the key theme of this month. There’s this niche author called Citrini who wrote a really undiscovered article about it and I think he summarized it well.

What do I think? Well, it depends. If you know anything about economics you may be familiar with the debate between Keynesian economics and Real Business Cycle (RBC) theory.

Basically, the Keynesians are demand-driven: if demand drops, because people don’t have the money to buy stuff or don’t want to buy stuff, then businesses won’t invest in the means of production to produce it, resulting in a vicious cycle. The RBC guys are supply-driven: if a fundamental input is made cheaper, productivity rises, which makes everyone richer, resulting in a virtuous cycle.

Generally the Keynesians are right in the short term but the RBC folks are right in the long term. This is probably true for AI, because the obvious effects, such as job loss, are immediate, while the hidden effects, like the removal of economically wasteful friction costs, take time.

If you get fired, you feel it immediately. But the cost improvements don’t fully ripple through the supply chain until years later.

I generally lean towards the RBC camp. That’s because we’re guaranteed to reach the higher productivity end state eventually. Whether we get there too abruptly and cause the Keynesian disruption or move slowly enough for people to adapt is a matter of chance.

Key Themes

I still structure my portfolio for approximately 3:2:2 exposure to optics:memory:logic (leading edge). Compared to January, I’d say my conviction in optics has increased relative to the other two, and so has my allocation to it.
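As a quick sanity check on what a 3:2:2 split means in percentage terms, here is a rough sketch. It assumes the ratio describes shares of the themed exposure only, not necessarily of the whole portfolio, and the variable names are just for illustration.

```python
from fractions import Fraction

# Target exposure ratio across the three themes (optics:memory:logic).
# Assumption: these are shares of themed exposure, not total portfolio weights.
ratio = {"optics": 3, "memory": 2, "logic": 2}

total = sum(ratio.values())
weights = {theme: Fraction(parts, total) for theme, parts in ratio.items()}

for theme, w in weights.items():
    print(f"{theme}: {float(w):.1%}")
# optics: 42.9%
# memory: 28.6%
# logic: 28.6%
```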

Optics

February was a pretty crazy month for optics names. We started with the massive Nvidia CPO pull-forwards, causing a 2x rally in LITE, and ended with the absurd guide from AAOI. I think the fundamentals here are only accelerating.

Optics is special because it’s a share gainer within a larger compute supercycle. Sometimes it’s really that simple. Crazy.

Memory

My stance here has not changed.

Memory Moore’s Law is running out, so bit growth has to come from capacity expansion.

Agents are much more context intensive than chatbots.

The big 3 have PTSD (Post Traumatic Supply Disorder) from COVID and won’t expand.

HBM yields fewer bits per wafer than standard DRAM and thus will continue to cannibalize supply.

Logic

We are seeing a lot more interest in fast decode for agentic inference using SRAM, as shown by the attention on Nvidia-Groq and OpenAI-Cerebras. SRAM is memory that is super expensive but super fast.

Agents unlock many new model use cases that don’t fit under the same “query chatbot, get answer in 15 sec” archetype, resulting in a need for new hardware optimized for them. This is where SRAM comes in. One example is using subagents to handle small tasks, which don’t need massive context windows.

The case for SRAM is even stronger now that the DRAM-to-SRAM cost ratio has increased significantly.

The thing that is bullish for logic foundry, and thus logic semicap, is that SRAM is made using standard CMOS transistors in a logic process, not in a separate DRAM process. So it’s made by TSMC/Intel, not Samsung/Hynix. Think about this intuitively: you can buy Crucial memory (RIP) externally, but your CPU comes with cache.

Portfolio Allocation

Brief take on AAOI included.