Cerebranalysis | Part 1: Dinner Plate Computing

Cerebras Analysis! The Nightmare Compiler, Wafer-Scale Yield, PVT Calibration, Tensor vs Pipeline Parallelism, Memory Constraints, I/O Bandwidth Problem, and The Economically Relevant Conclusion

Welcome to Cerebranalysis!

Over the next two days we shall do an analytical analysis of Cerebras in advance of the IPO. Today’s article focuses on their technology and tomorrow’s will be on stock-related stuff (business, market relevance, and financials).

Introduction to Dinner Plate Computing

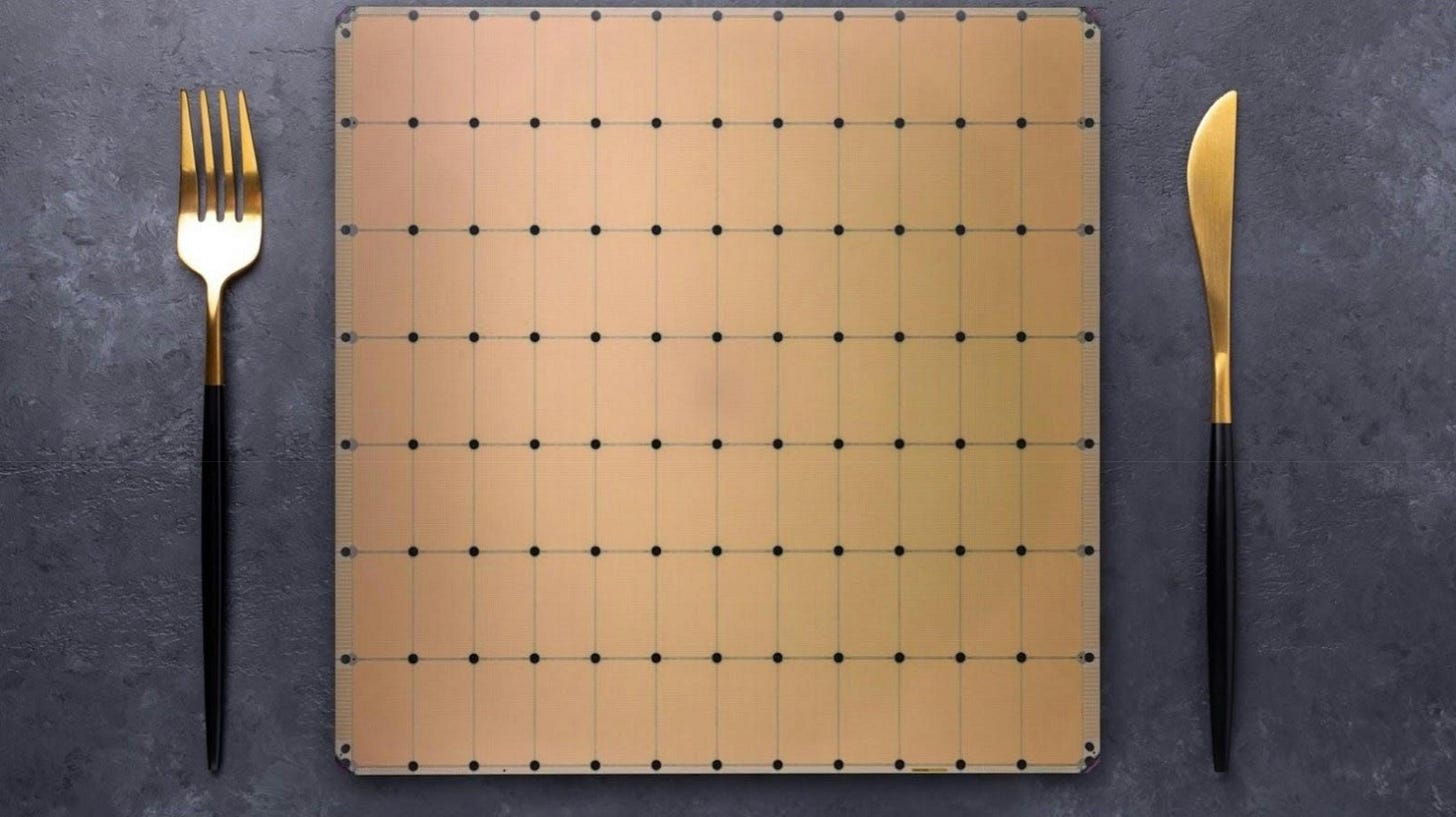

Cerebras is the well-known pioneer of dinner plate computing.

Their foundational premise is that, BIGGER IS BETTER.

Normally, when you order a wafer from TSMC, you slice up your wafer into dies. Cerebras decided to keep all of the dies intact and instead network them together into one really big chip.

These spaces between the dies are called keep-out zones because normally you keep everything out of them because they will be cut up by the diamond saws. In this case, these keep-out zones are the home for complex wiring and networking. This means that Cerebras needed to collaborate intimately with TSMC to develop proprietary IP in order to fill up these keep-out zones with networking.

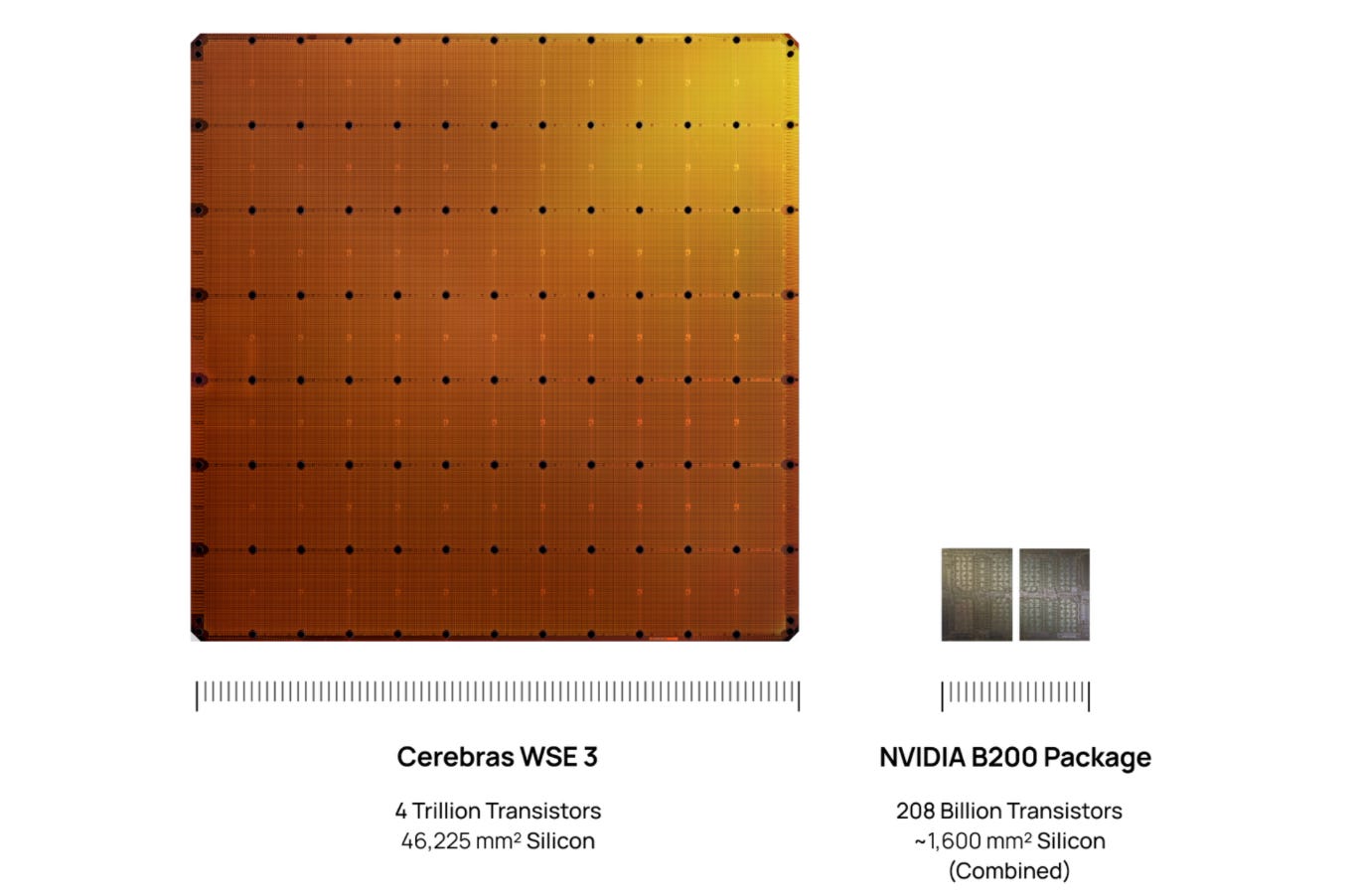

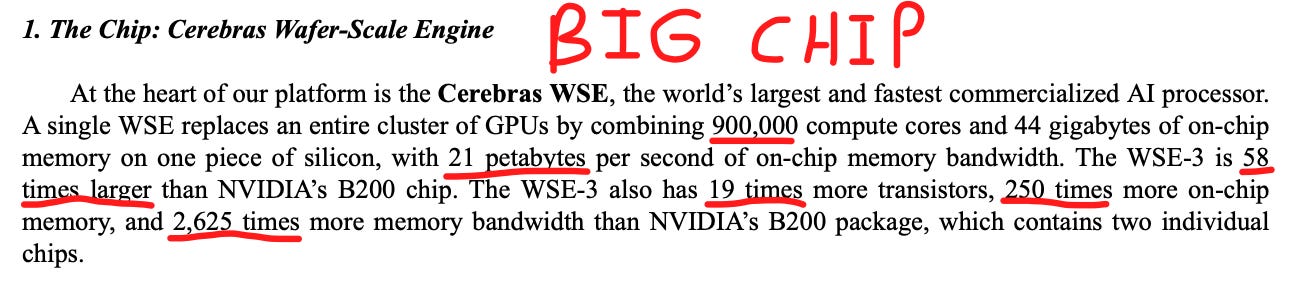

Throughout their investor materials, they make a plethora of comparisons to traditional computing with a bunch of metrics of stuff that chips do (compute cores, transistors, memory bandwidth, FLOPs), and because their chip is bigger, they can do more chip things.

This can mislead retail investors, as in real life there is always a trade off. Cerebras is simply a computing architecture optimized very differently than traditional GPUs.

Abstract

Today’s article focuses on the science and technology behind the Cerebras Wafer-Scale Engine.

First, I discuss the Cerebras distributed SRAM problem, and how this leads to extreme compiler complexity and inefficiency. I make the comparison to NVIDIA’s GB200 clear through an analogy: the tale of two cities.

Next, we find out how Cerebras claims a 100% yield rate on their wafer-scale engines, despite wafers being nearly guaranteed to have a defect.

Then we dissect PVT (process, voltage, temperature) calibration, one of Cerebras’s most important innovations, one that solves a problem which prevented the entire wafer-scale industry from taking off for decades.

After that, we talk about the science behind the one mechanism which enables their 15x inference speedup: pipeline parallelism. We explore how a normal GPU runs inference on a transformer (tensor parallelism), walking you through what happens inside a rack per-token and per-model-layer, before contrasting it with the Cerebras WSE approach (pipeline parallelism) and how data literally flows down the wafers like water through a river.

Following which we detail the two key constraints their architecture has, first being severe memory constraints and second being the limited I/O bandwidth due to very large area relative to perimeter.

And finally, we go one step beyond most other technical research out there. We underline why all this technological analysis is economically relevant for their product. I will walk you through the single conclusion that follows from our research, which forms the foundational premise for all of our economic and business-related analysis coming tomorrow.

Importantly, I make it all fun and understandable.

These two Cerebranalysis articles are a must-read. Get strapped in. This stuff is crazy technical but technical is fun. Let’s go!

Contents

The Tale of Two Cities & The Nightmare Compiler

Wafer-Scale Yield

PVT Calibration

Fast Inference (Tensor vs Pipeline Parallelism)

Memory Constraints

The I/O Bandwidth Problem

The Economically Relevant Conclusion

By accessing this content, you acknowledge and agree to our terms and conditions. This research is not financial advice.